Comparing LLMs for Custom Agents

Last updated: April 2, 2025

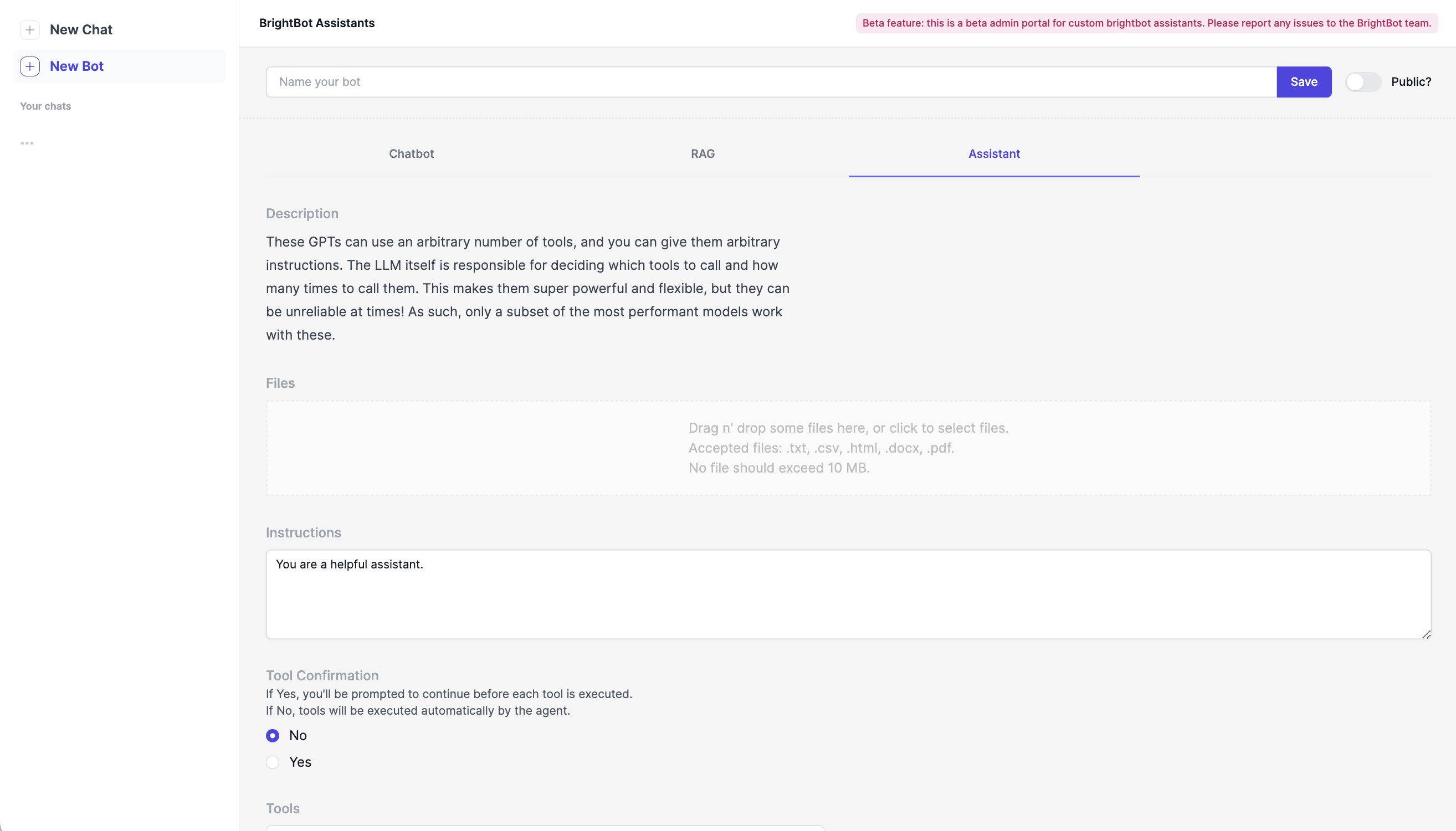

View of BrightBot Assistants Admin Portal.

Brighthive lets you choose from a variety of Large Language Models (LLMs) when creating BrightBot Assistants. This flexibility is powerful, but it can also be confusing because the LLM market changes so quickly.

Benchmarking guides are a great resource for understanding the strengths and weaknesses of each LLM. Benchmarking is a set of standardized tests that measure a model's specific skills. Read more about benchmarking and how it works in this guide to LLM Benchmarking from IBM.

Benchmarks are released regularly by a variety of companies and individual tech enthusiasts. A few that our team has found helpful include the HuggingFace Benchmark Collection (which updates regularly) and the Vellum LLM Benchmarks (updated September 2024). These are just a starting point. New benchmarks appear almost as often as the models themselves are updated, so we encourage users to explore the wider and constantly evolving set of LLM benchmarks.

Ultimately, each model will make the bot behave differently, and the providers are constantly pushing changes to these publicly available models. If you aren't getting consistent answers with one LLM, switching to another can be an easy way to adjust performance. When in doubt, test it out!